What Every Developer Team Needs to Know Before It Becomes a $9.8 Million Problem

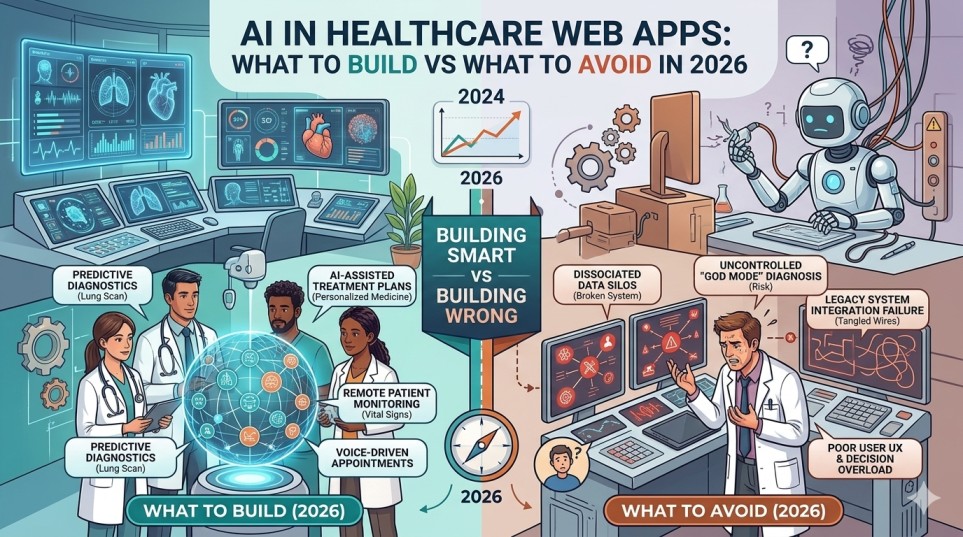

Healthcare AI is growing fast, and so is the exposure that comes with it. The healthcare AI market stood at $21.66 billion in 2025 and is projected to reach $148.4 billion by 2029. That kind of growth attracts investment, raises product ambitions, and shortens development timelines. It also multiplies the ways a development team can get things badly wrong.

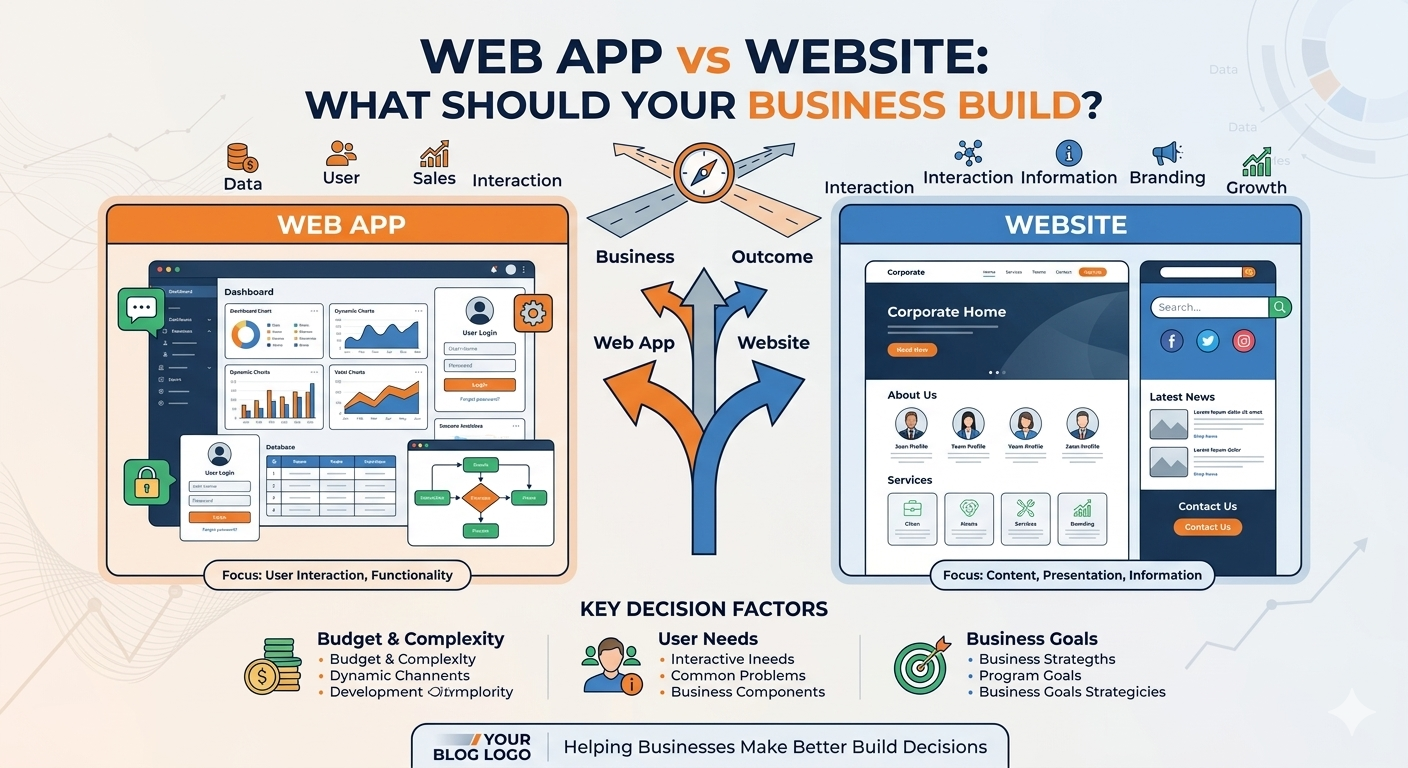

This is not an article about doing the right thing. It is about what happens to product timelines, enterprise deals, and company valuations when a developer team builds a healthcare AI app without treating data privacy as a core architecture decision: not an afterthought, not a legal team problem, and not something to retrofit after the first hospital pilot.

The stakes are financial and structural. Healthcare breaches cost an average of $7.42 million per incident in the U.S. in 2025, more than any other industry, and healthcare has held that position for 14 consecutive years. A year earlier, IBM Security’s 2024 Cost of a Data Breach Report put the average healthcare breach at $9.8 million, roughly double the cross-industry average in that report. Neither number includes the lost contracts, the failed vendor assessments, and the enterprise clients who simply never come back.

The Compliance Gap Is Now a Revenue Gate

Here is the problem development teams keep running into: they build a functional product, they get interest from a health system or payer, and then they hit a vendor risk assessment. The procurement team asks for evidence of HIPAA compliance, BAA coverage across all vendors, audit logging, and encryption documentation. If the team cannot produce it, the deal does not move forward, regardless of how strong the product is.

For many first-time founders and development teams, the reality is that healthcare clients, from hospitals to insurance providers, will not even consider a product unless it is already compliant. Passing a vendor risk assessment is often the first gate to closing a deal. Waiting until “later” to address compliance can mean costly rework, missed deadlines, and lost opportunities before a product ever reaches patients.

The technical debt compounds quickly. A chatbot that collects symptoms. An LLM API call that processes a patient query. An analytics pixel embedded in a provider-facing dashboard. Each of these can become an unauthorized disclosure of Protected Health Information (PHI) if the vendor on the other end has not signed a Business Associate Agreement (BAA). One company had a BAA with Anthropic but not OpenAI. A developer tried OpenAI for a task where it performed better, not realizing that a BAA was required. The team found out only when the developer mentioned it in standup. The fix was straightforward, but it required disclosure to the compliance team and documentation of the incident.

That is a best-case scenario. In many cases, teams discover the gap during due diligence for a funding round or an enterprise contract, and it unravels months of commercial progress.
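One way to catch the kind of vendor mix-up described above before it happens is a fail-closed check in front of every outbound call. The sketch below is illustrative only: the `VENDOR_REGISTRY` contents, the vendor names, and the `assert_phi_allowed` helper are hypothetical, and the `contains_phi` flag is assumed to be set by the caller.

```python
from dataclasses import dataclass


class BAANotSignedError(RuntimeError):
    """Raised when PHI is about to reach a vendor with no BAA on file."""


@dataclass(frozen=True)
class Vendor:
    name: str
    baa_signed: bool


# Hypothetical registry. In practice this would be loaded from a record the
# compliance team owns, not hard-coded by developers.
VENDOR_REGISTRY = {
    "llm-vendor-a": Vendor("llm-vendor-a", baa_signed=True),
    "llm-vendor-b": Vendor("llm-vendor-b", baa_signed=False),
}


def assert_phi_allowed(vendor_name: str, contains_phi: bool) -> None:
    """Fail closed: unknown vendors and vendors without a BAA never get PHI."""
    if not contains_phi:
        return
    vendor = VENDOR_REGISTRY.get(vendor_name)
    if vendor is None or not vendor.baa_signed:
        raise BAANotSignedError(
            f"No BAA on file for {vendor_name!r}; refusing to send PHI."
        )


# Usage: call the guard before every outbound request.
assert_phi_allowed("llm-vendor-a", contains_phi=True)  # passes silently
try:
    assert_phi_allowed("llm-vendor-b", contains_phi=True)
except BAANotSignedError as err:
    print(err)
```

Treating an unknown vendor the same as an unsigned one is the design choice that matters here: a new SDK or API added by a developer is blocked until someone records a BAA for it.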

In January 2025, the HHS Office for Civil Rights proposed the first major update to the HIPAA Security Rule in 20 years, citing the rise in ransomware and the need for stronger cybersecurity. For organizations deploying AI in healthcare, these changes are especially significant: they remove the distinction between required and addressable safeguards and introduce stricter expectations for risk management, encryption, and resilience. That is not a distant regulatory shift. It is already affecting how healthcare procurement teams evaluate vendors today.

How Leading Teams Are Building It Right

Development firms that operate repeatedly in the healthcare space, including GeekyAnts, which has shipped HIPAA and GDPR-compliant products across telemedicine, mental health, and EHR integration, have moved toward treating compliance as a design constraint rather than a compliance layer. That distinction matters more than it sounds. A design constraint shapes architecture from day one. A compliance layer gets bolted on at the end and is frequently incomplete.

The same pattern shows up at larger scale. Microsoft’s Azure OpenAI Service, Google Health, and Epic have all invested heavily in compliance-ready infrastructure precisely because their healthcare clients demand it before any commercial conversation begins. Azure OpenAI, for instance, can be deployed within Microsoft’s compliance boundary and covered under a BAA when configured appropriately, a detail that shapes which AI vendors developers can legally use for PHI-touching features.

The surprising thing about HIPAA compliance with AI is not that it is hard; it is that most teams are solving the wrong problem. They think the challenge is picking the right vendor or writing the perfect policy. The real challenge is understanding where patient data actually shows up in the development workflow. A developer copies a database query from production to debug something. Another pastes an API response into a prompt to ask for help. In healthcare, every one of those actions could be a HIPAA violation.

The practical implication: compliance in AI healthcare apps is not a documentation exercise. It is an infrastructure and workflow discipline.
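As a workflow-level illustration, a team might scan text for obvious identifiers before it is pasted into a prompt or a ticket. The sketch below is a minimal example under stated assumptions: the patterns, the `MRN` label format, and the `find_identifiers` and `redact` helpers are hypothetical. Pattern matching of this kind catches only a few easily recognized identifiers; it is not HIPAA de-identification and will miss names, dates, and free-text details.

```python
import re

# Hypothetical patterns for a few easily recognized identifiers.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),
}


def find_identifiers(text: str) -> dict[str, list[str]]:
    """Return every match per pattern name, omitting patterns with no hits."""
    hits = {name: rx.findall(text) for name, rx in PATTERNS.items()}
    return {name: found for name, found in hits.items() if found}


def redact(text: str) -> str:
    """Replace each match with a labeled placeholder."""
    for name, rx in PATTERNS.items():
        text = rx.sub(f"[REDACTED:{name}]", text)
    return text


# Usage: block or scrub text before it leaves the developer's machine.
sample = "Patient MRN: 12345678, call 555-123-4567, SSN 123-45-6789"
if find_identifiers(sample):
    print(redact(sample))
```

A check like this is best treated as a tripwire that prompts a developer to stop and use synthetic data, not as proof that a prompt is free of PHI.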

Here are the non-negotiable technical requirements development teams must implement before any PHI touches an AI system:

- BAA coverage for every vendor in the data path: not just the primary LLM provider, but logging infrastructure, cloud storage, analytics tools, and any third-party SDK that processes or stores user data.

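- AES-256 encryption at rest and TLS in transit: the current HIPAA Security Rule lists encryption as an addressable safeguard, and the proposed 2025 update would make it required. Either way, vague “industry-standard encryption” language in a vendor contract is not sufficient.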

- Audit logging with tamper-resistant storage: every interaction with PHI must be captured, retained per your policy, and exportable for audits by the HHS Office for Civil Rights (OCR).

- Role-Based Access Control (RBAC) and Multi-Factor Authentication (MFA): particularly critical in administrative interfaces and model training environments.

- PHI de-identification for model training data: using either HIPAA’s Safe Harbor method (removing 18 specific identifiers) or Expert Determination, both of which must be validated for the specific AI use case.
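To make the audit-logging requirement in the list above more concrete, here is a minimal sketch of a hash-chained log, where each entry commits to the one before it so that later edits become detectable. The field names and the `append_entry` and `verify_chain` helpers are hypothetical. This makes a log tamper-evident, not tamper-proof; a real deployment would presumably pair it with write-once storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash that precedes the first entry
FIELDS = ("ts", "actor", "action", "resource")


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, resource: str) -> dict:
    """Append an entry that commits to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "resource": resource,  # store an identifier, never the PHI itself
    }
    entry = {**payload, "prev_hash": prev_hash, "hash": _digest(prev_hash, payload)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        payload = {key: entry[key] for key in FIELDS}
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, payload):
            return False
        prev_hash = entry["hash"]
    return True


# Usage: record each PHI access, then verify before exporting for an audit.
audit_log: list = []
append_entry(audit_log, "dr.lee", "read", "record/123")
append_entry(audit_log, "svc-llm", "summarize", "record/123")
print(verify_chain(audit_log))
```

Because every hash depends on all earlier entries, changing one historical record forces an attacker to rewrite everything after it, which is what makes silent edits hard to hide.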

Successful AI implementation requires integrating security and compliance measures from the beginning of development rather than treating them as a later-stage concern. That is the operational discipline that separates teams that close enterprise healthcare deals from teams that stall in procurement.

Compliance Is the Competitive Moat

The breach data makes this concrete. In 2024, 79% of healthcare providers were targeted by emails involving hacking incidents and unauthorized access, according to the U.S. Department of Health and Human Services. Healthcare is the top target for ransomware attackers, accounting for 17% of ransomware attacks across all industries, with average ransom demands reaching $7 million. Healthcare clients know this. They evaluate every vendor through that lens.

The developer team that treats privacy architecture as a first-class engineering concern, not a legal obligation to be satisfied at the end, is the team that moves faster through procurement, closes enterprise deals, and builds a product that survives regulatory audits. Healthcare companies that implement HIPAA-compliant AI tools now will have working systems when regulators tighten enforcement. Companies that wait will be scrambling to retrofit compliance onto tools they have been using incorrectly for years.

The market is large enough that this is not a philosophical debate. It is a business decision. Compliance is not the ceiling on what an AI healthcare product can be. For the teams building seriously, it is the foundation everything else sits on.