The decisions your team makes in the next 12 months will create either a durable competitive edge or a compliance liability that takes years to unwind.

The money flowing into healthcare AI right now is real. So is the pressure on product and engineering leaders to show results from it. The global AI in healthcare market sat at roughly $39 billion in 2025 and is projected to cross $56 billion in 2026 alone, growing at a CAGR above 40% through 2034 (Fortune Business Insights). Decision-makers are not short on ambition. What they are short on is a clear framework for where AI actually delivers in a web application and where it quietly creates risk that surfaces at the worst possible time.

This piece is not about the promise of healthcare AI. It is about the practical decisions your team needs to make before the next sprint planning session.

The Gap Between the Pitch Deck and the Product

Most healthcare technology teams are not starting from zero. They have existing EHR integrations, patient portals, or telehealth modules that were built under one set of constraints and are now expected to carry AI features they were never designed for.

The challenge is not the AI models themselves. The challenge is that 97% of hospital data goes unused, and healthcare data breaches now cost an average of $7.42 million per incident, taking an average of 279 days to identify and contain, more than five weeks longer than the global average (IBM Cost of a Data Breach, 2025 / Knowi Healthcare Analytics Report, 2026). Teams are being asked to build faster on infrastructure that was built to minimize change.

At the same time, shadow AI has become a genuine operational risk. Roughly 40% of hospitals now have unmanaged, unauthorized AI tool usage by staff, and 57% of healthcare professionals have used AI tools that bypass official IT oversight (Wolters Kluwer, 2026). When a nurse pastes clinical notes into a public LLM to draft a patient summary, that is not a technology failure. It is a product gap. The approved tools are too slow, too clunky, or too incomplete. Staff route around them.

The product decisions that matter in 2026 are the ones that close that gap before regulators close it for you.

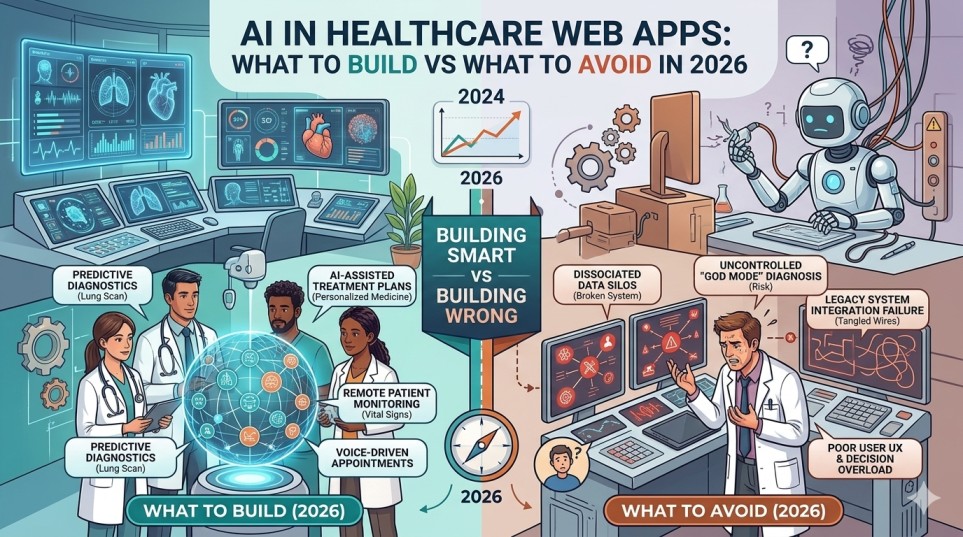

What to Build: Four AI Features With Demonstrable ROI

The features below are not speculative. They reflect where healthcare organizations are already realizing returns, where the regulatory frameworks are mature enough to build on, and where real user behavior is pulling demand.

- AI-assisted clinical documentation and ambient scribing. Administrative burden is one of the sharpest pain points in clinical settings. In 2025, 35% of healthcare professionals reported spending more time on administrative tasks than on direct patient care (Vention Teams, 2025). AI-powered documentation tools, systems that listen to a clinical encounter and generate structured notes, are already reducing this burden at scale. Organizations using them report meaningful reductions in after-hours charting, a leading predictor of physician burnout. This is the category where ROI is most directly measurable and where vendor infrastructure (Azure OpenAI with signed BAAs, Google Cloud Healthcare API) is mature enough to build on safely.

- Predictive risk stratification in patient-facing portals. Using machine learning to surface high-risk patients before they deteriorate is not new, but embedding it into the web app layer, so that care coordinators see it in their existing workflow rather than in a separate analytics tool, is where teams consistently under-invest. The ROI on healthcare AI overall averages $3.20 for every $1 invested, with returns typically realized within 14 months (DemandSage, 2025). Risk stratification is one of the primary drivers of that number.

- Interoperability middleware powered by NLP. Most FHIR integration work is still done manually, with engineers hand-mapping fields between systems. NLP-assisted data normalization, meaning tools that can parse unstructured clinical text and map it to structured FHIR resources, eliminates significant manual overhead and reduces the error rate in data exchange. For any organization managing multiple EHR integrations, this is where AI earns its budget.

- Intelligent scheduling and prior authorization automation. Prior authorization alone costs the U.S. healthcare system an estimated $31 billion annually in administrative overhead (AMA, 2023). Web applications that use AI to pre-populate auth requests, predict approval likelihood, and flag missing documentation before submission have a short payback period and a clear success metric.
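
As one illustration of the last item, the "flag missing documentation before submission" step can start as a plain rule check that runs before any model is involved. The sketch below is a minimal example under stated assumptions: the `REQUIRED_DOCS` table, the document names, and the `AuthRequest` shape are all hypothetical, and real requirements vary by payer and plan. It does not attempt the approval-likelihood prediction, which would need historical payer data.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical requirements table: documents a payer expects per procedure
# category. Real rules differ by payer and plan and would be loaded from config.
REQUIRED_DOCS: Dict[str, Set[str]] = {
    "imaging": {"clinical_notes", "referral_order"},
    "surgery": {"clinical_notes", "referral_order", "conservative_treatment_history"},
}


@dataclass
class AuthRequest:
    """A prior authorization request as the web app might hold it (assumed shape)."""
    procedure_category: str
    attached_docs: Set[str] = field(default_factory=set)


def missing_documents(request: AuthRequest) -> List[str]:
    """Return required documents not yet attached, sorted for stable display."""
    required = REQUIRED_DOCS.get(request.procedure_category)
    if required is None:
        # Unknown category: surface it instead of silently passing the request.
        raise ValueError(f"no requirements defined for {request.procedure_category!r}")
    return sorted(required - request.attached_docs)


request = AuthRequest("surgery", {"clinical_notes"})
print(missing_documents(request))
# ['conservative_treatment_history', 'referral_order']
```

A check like this also gives the feature its success metric: the share of requests that reach the payer with an empty missing-documents list.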

What to Avoid: The Mistakes That Look Like Strategy

The most expensive healthcare AI mistakes in 2026 are not technical. They are architectural and governance decisions made early in a project that create compounding liability.

Avoid building on public LLMs without a signed Business Associate Agreement (BAA). This is the single most common compliance gap in healthcare AI development right now. The public versions of tools like ChatGPT, Gemini, and Claude are not covered by a BAA and may use input data for model training or improvement (Knack, 2025). Any use of these tools in a workflow that touches protected health information (PHI) is a HIPAA violation, regardless of how the feature is framed internally. The risk is not hypothetical. In 2025, Comstar LLC faced regulatory action after a ransomware event compromised the PHI of over 585,000 individuals, with investigators finding the organization had failed to conduct a HIPAA-compliant risk analysis on its AI-enhanced systems (Censinet, 2025).

Avoid diagnostic AI features that lack a human-in-the-loop checkpoint. The diagnostic accuracy of generative AI models across a meta-analysis of 83 studies was 52.1%, comparable to non-expert physicians but significantly below expert-level accuracy (DemandSage, 2025). Building a feature that positions AI output as a clinical decision rather than a clinical input creates both liability and patient safety risk. Regulators and enterprise buyers are paying attention to this distinction.
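
The decision-versus-input distinction can be enforced in the data model rather than left to UI copy. The following is a minimal sketch, not a reference design: `AiSuggestion`, `record_review`, and `commit_to_chart` are hypothetical names, and the in-memory list stands in for a real chart store. The point it illustrates is that model output stays a draft until a named clinician accepts it.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AiSuggestion:
    """Model output held as a draft until a clinician reviews it (assumed shape)."""
    patient_id: str
    text: str
    reviewed_by: Optional[str] = None  # clinician ID, set only at sign-off
    accepted: Optional[bool] = None    # None means "not yet reviewed"


def record_review(suggestion: AiSuggestion, clinician_id: str, accepted: bool) -> None:
    """Attach a clinician's decision to the suggestion."""
    if not clinician_id:
        raise ValueError("a review must be attributed to a clinician")
    suggestion.reviewed_by = clinician_id
    suggestion.accepted = accepted


def commit_to_chart(suggestion: AiSuggestion, chart: List[str]) -> None:
    """Write to the chart only if a clinician has explicitly accepted the draft."""
    if suggestion.reviewed_by is None or suggestion.accepted is not True:
        raise PermissionError("AI suggestion has not been accepted by a clinician")
    chart.append(f"{suggestion.text} (AI-drafted, accepted by {suggestion.reviewed_by})")


chart: List[str] = []
draft = AiSuggestion(patient_id="p-001", text="Consider follow-up imaging.")
record_review(draft, clinician_id="dr-lee", accepted=True)
commit_to_chart(draft, chart)
print(chart)
```

Because `commit_to_chart` is the only write path in this sketch, an unreviewed or rejected suggestion cannot reach the record, and every committed entry carries the reviewer's identity.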

Avoid bolting compliance onto a finished product. The January 2025 proposed update to the HIPAA Security Rule, the first major revision in 20 years, would remove the distinction between required and addressable safeguards and introduce stricter expectations around encryption, risk management, and incident response, including for AI systems that handle PHI (HHS Office for Civil Rights, 2025). Architecture decisions made at the beginning of a project are far cheaper to change than compliance remediation at the end of one. Building a feature that is “HIPAA-eligible” is not the same as building one that is HIPAA-compliant. The configuration, logging, access control, and audit trail all matter.

Avoid the AI governance gap. According to IBM’s 2025 data, 63% of organizations have no AI governance policies in place, and 13% reported breaches of AI models or applications, of which 97% lacked proper AI access controls (Knowi, 2026). A healthcare web app without an AI governance layer is not a product. It is a liability waiting to surface.
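
A governance layer does not have to start large. The sketch below shows the two pieces the statistics above point at, access control and an audit trail, in their simplest form. Everything in it is an assumption for illustration: the role names, the feature names, the `AI_ACCESS_POLICY` table, and the in-memory `audit_log`, which stands in for an append-only store.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical policy: which roles may invoke which AI features.
AI_ACCESS_POLICY: Dict[str, Set[str]] = {
    "ambient_scribe": {"physician", "nurse_practitioner"},
    "risk_stratification": {"care_coordinator", "physician"},
}

# Stand-in for an append-only audit store; a list is used only for the sketch.
audit_log: List[str] = []


def authorize_ai_call(user_id: str, role: str, feature: str) -> bool:
    """Decide whether a role may use an AI feature, logging every attempt."""
    # Features missing from the policy are denied by default.
    allowed = role in AI_ACCESS_POLICY.get(feature, set())
    audit_log.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "role": role,
        "feature": feature,
        "allowed": allowed,
    }))
    return allowed


print(authorize_ai_call("u-17", "physician", "ambient_scribe"))      # True
print(authorize_ai_call("u-42", "billing_clerk", "ambient_scribe"))  # False
```

Denied attempts are logged as well as allowed ones, since a pattern of denials is often the earliest visible sign that staff want a capability the approved tools do not offer.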

How Leading Teams Are Navigating This

The companies making real progress in healthcare AI web apps share a consistent pattern: they treat compliance infrastructure as a product feature, not an afterthought.

Microsoft and Google Cloud have invested heavily in HIPAA-eligible AI infrastructure precisely because enterprise healthcare buyers will not sign contracts without it. Epic has taken a measured approach to embedding AI in its clinical workflows, focusing on documentation and decision support rather than autonomous diagnosis, a posture that reflects where regulatory tolerance currently sits.

In the development services space, firms like GeekyAnts have been working on HIPAA-compliant healthcare web apps that span patient portals, telemedicine platforms, and EHR integrations. Their publicly stated approach involves building compliance into architecture from the first sprint rather than auditing for it before launch, a pattern that matches what the data suggests works. The firm has also noted publicly that healthcare AI initiatives most often fail not at the model level but at data infrastructure and physician adoption (GeekyAnts, 2025).

Cognizant and Google Cloud launched a joint set of healthcare LLM solutions in 2024 that focus specifically on administrative process redesign, not clinical diagnosis. NVIDIA’s 2025 partnership with Mayo Clinic, IQVIA, and Illumina targets drug discovery and genomic research, areas where AI model performance is measurable and the regulatory pathway is clearer.

The pattern is consistent: the teams doing this well are building narrow, well-governed AI features with defined success metrics, not broad AI layers with diffuse accountability.

The Actual Decision Your Team Needs to Make

The question for 2026 is not whether your healthcare web application should include AI. That decision is already made by market pressure. The question is whether your team builds AI features in a way that compounds value or compounds risk.

The budget pressure to ship fast is real. So is the regulatory pressure building around AI in clinical contexts. The teams that will have the clearest ROI story in 18 months are the ones that resisted the temptation to treat AI as a product skin and instead embedded it into specific workflows with measurable outcomes, signed the right agreements before writing the first line of code, and built governance policies before staff found workarounds.

That is not a conservative strategy. It is the only strategy that compounds.

Frequently Asked Questions

What is the biggest compliance risk when building an AI feature in a healthcare web app in 2026?

The most common and most costly mistake is integrating a public LLM or AI service without a signed Business Associate Agreement. Under HIPAA, any vendor that creates, receives, maintains, or transmits protected health information on your behalf must sign a BAA. Without one, the integration itself constitutes a violation, regardless of intent or technical configuration. The January 2025 proposed update to the HIPAA Security Rule is expected to make enforcement in this area more stringent, not less.

Which AI features have the clearest ROI for healthcare web applications right now?

Clinical documentation automation and ambient scribing consistently show the shortest payback period. Prior authorization automation and intelligent scheduling are close behind. Predictive risk stratification has strong ROI but requires more mature data infrastructure to implement correctly. Avoid building for diagnostic autonomy: the regulatory pathway is long and the liability exposure is significant.

How is shadow AI affecting healthcare organizations, and what does it mean for product teams?

Shadow AI, the unauthorized use of AI tools by staff without IT oversight, is present in roughly 40% of hospitals (Wolters Kluwer, 2026). It adds an average of $670,000 to breach costs and is linked to a 240% year-over-year increase in unauthorized access incidents (IBM, 2025). For product teams, this is a signal: if staff are routing around approved tools, the approved tools are not solving the actual problem. The fix is building sanctioned tools that are fast and capable enough that workarounds are unnecessary.

What should a healthcare web app development team do before selecting an AI vendor?

Before any vendor selection, confirm three things: whether the vendor will sign a BAA, which specific features are covered by that BAA, and whether any PHI leaves the compliant environment through model training, third-party integrations, or logging. “HIPAA-eligible” is a marketing term. HIPAA-compliant is a configuration and contract requirement. Also confirm the vendor’s approach to data retention and model training on customer data, since this is frequently where compliance gaps appear.
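
Those three checks can be captured as a structured record so that every vendor review produces the same comparable output. This is a minimal sketch under stated assumptions: `VendorAssessment`, its field names, and the example vendor and feature names are hypothetical, and the answers would come from the vendor's contract and documentation, not from code.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class VendorAssessment:
    """Answers gathered during vendor review (assumed shape)."""
    name: str
    signs_baa: bool
    baa_covered_features: Set[str]
    phi_used_for_training: bool
    phi_sent_to_third_parties: bool
    phi_in_vendor_logs: bool


def blocking_issues(vendor: VendorAssessment, needed_features: Set[str]) -> List[str]:
    """Return the reasons this vendor cannot yet be used with PHI."""
    issues: List[str] = []
    if not vendor.signs_baa:
        issues.append("vendor will not sign a BAA")
    # A BAA often covers only part of a product line, so check feature by feature.
    uncovered = sorted(needed_features - vendor.baa_covered_features)
    if uncovered:
        issues.append("features not covered by the BAA: " + ", ".join(uncovered))
    if vendor.phi_used_for_training:
        issues.append("PHI may be used for model training")
    if vendor.phi_sent_to_third_parties:
        issues.append("PHI may reach third-party integrations")
    if vendor.phi_in_vendor_logs:
        issues.append("PHI may be retained in vendor logs")
    return issues


vendor = VendorAssessment(
    name="ExampleAI",  # hypothetical vendor
    signs_baa=True,
    baa_covered_features={"text_generation"},
    phi_used_for_training=False,
    phi_sent_to_third_parties=False,
    phi_in_vendor_logs=True,
)
print(blocking_issues(vendor, {"text_generation", "speech_to_text"}))
```

An empty list is the bar for proceeding. Anything else is a concrete item to resolve with the vendor or a reason to narrow the feature scope.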

How are HIPAA Security Rule changes in 2025 affecting AI development decisions?

The proposed January 2025 update to the HIPAA Security Rule would remove the historical distinction between “required” and “addressable” implementation specifications. For AI systems, this means stricter expectations around encryption at rest and in transit, risk management documentation, and incident response planning. Any healthcare web application being architected in 2026 should be built to meet these proposed standards, not the 2003-era baseline, given that the update is expected to be finalized and the regulatory direction is clear regardless of exact timing.